Still Night

Turning Starry Night into an instrument you can play.

An old question

What is the relationship between color and sound?

Arcimboldo mapped tones to luminosity in 1590. Newton matched seven colors to seven notes in 1704. Castel built a harpsichord that showed colored glass as it played. Each generation tried again with the tools it had.

Most assumed notes translated to hues. It doesn't work that way.

Ocular harpsichord

Mapping sound and color

Brightness, not hue

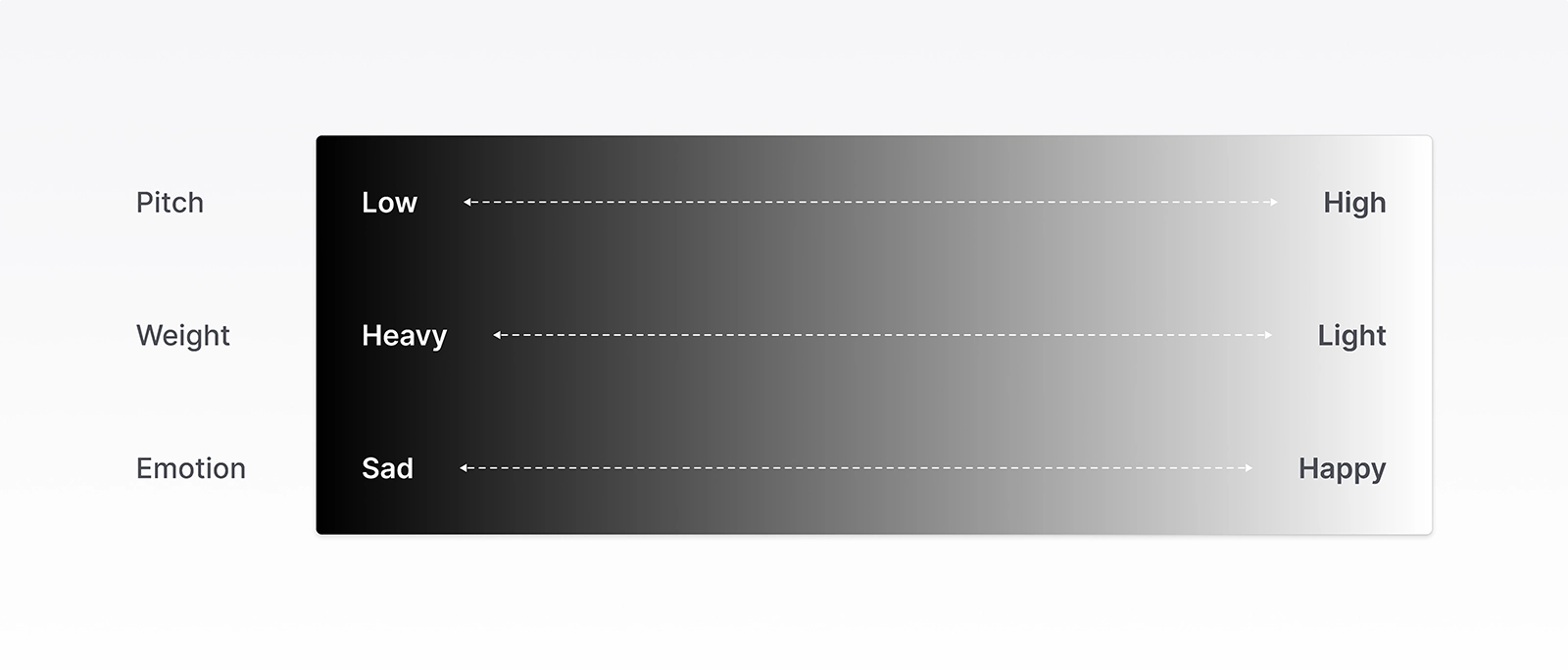

Hue was the wrong axis. Brightness was the right one.

Recent research found that the strongest link between color and sound isn't hue. It's brightness. Light colors make a sound feel higher and lighter. Dark colors make it feel lower and heavier. The pairing doesn't seem to be learned. We appear to be born with it.

Brightness does three things at once. It sets the pitch. It shapes the weight. It shifts the emotional character.

That's what Still Night is built on.

The brightness axis

Measuring Starry Night

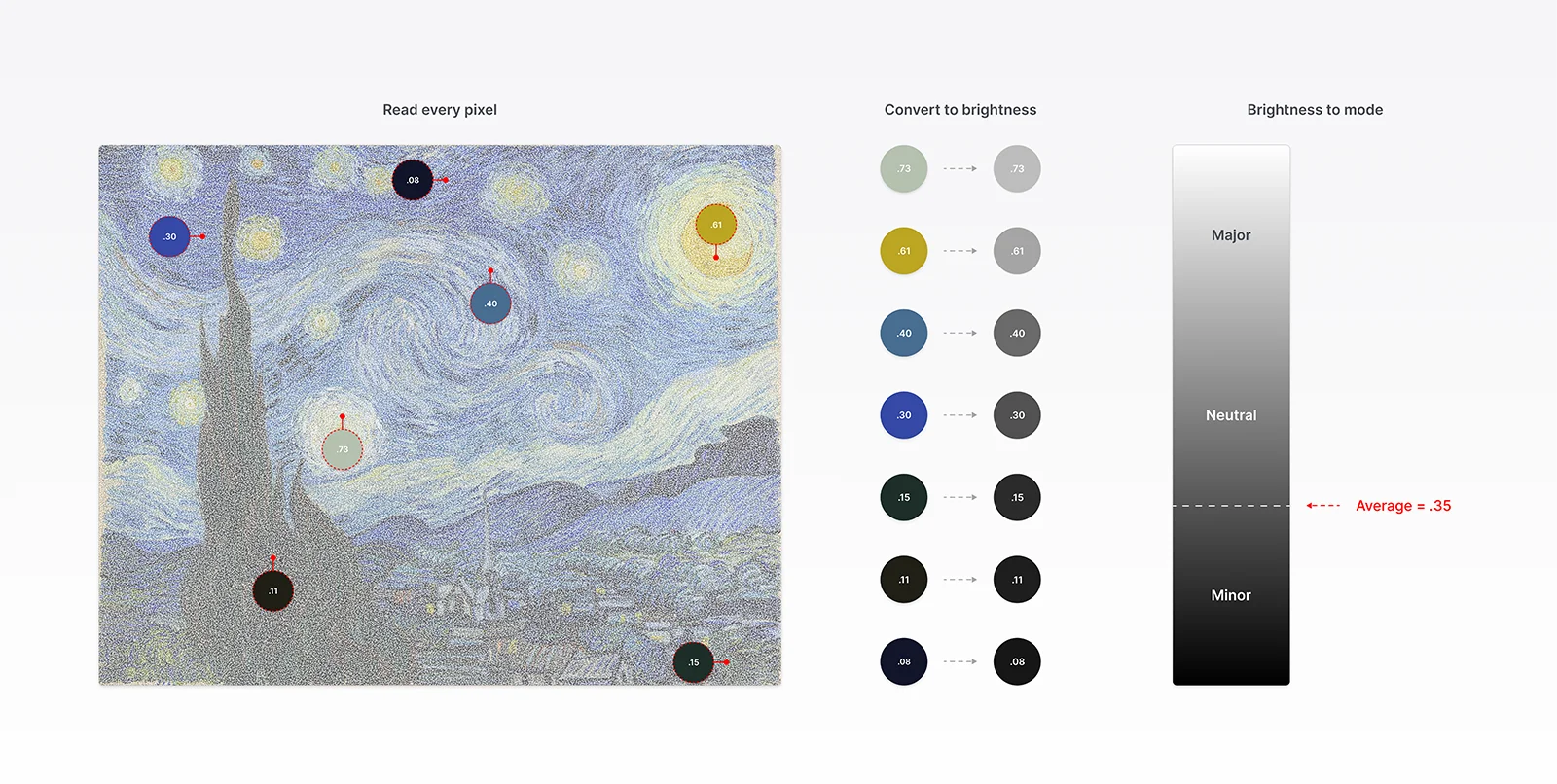

I built a tool that reads a painting the way the research suggests we perceive it.

It measures brightness and applies the mapping. Starry Night came back in the darker half of the range. Minor mode. Anchored low, where a piano's middle-to-low keys sit.
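The measurement step can be sketched in a few lines. This is a minimal illustration, not Still Night's actual tool. It assumes the painting arrives as a list of RGB pixels and uses CIE L* as the brightness measure. The 0.5 threshold and the MIDI range of 36 to 72 are picked purely for illustration. The names `lightness` and `read_painting` are hypothetical.

```python
# Hypothetical sketch: measure a painting's overall brightness and map it to a
# mode and a register. The 0.5 threshold and MIDI range 36..72 are assumptions.

def srgb_to_linear(c):
    """Undo the sRGB transfer curve for one channel value in 0..1."""
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4

def lightness(rgb):
    """Perceptual lightness (CIE L*) of an (r, g, b) pixel, scaled to 0..1."""
    r, g, b = (srgb_to_linear(v / 255) for v in rgb)
    y = 0.2126 * r + 0.7152 * g + 0.0722 * b  # relative luminance (sRGB primaries)
    l_star = 116 * y ** (1 / 3) - 16 if y > 216 / 24389 else (24389 / 27) * y
    return l_star / 100

def read_painting(pixels):
    """Return (mean lightness, mode, root MIDI note) for a list of RGB pixels."""
    mean = sum(lightness(p) for p in pixels) / len(pixels)
    mode = "minor" if mean < 0.5 else "major"  # darker half of the range -> minor
    root_midi = round(36 + mean * (72 - 36))   # C2 (36) for black up to C5 (72) for white
    return mean, mode, root_midi
```

Under this kind of mapping, a painting dominated by deep blues averages well below 0.5, so it reads as minor with a low root note. L* is used instead of raw luminance because it tracks how light a color *looks*. A mid-gray therefore lands near the middle of the range rather than near the bottom.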

The painting chose the sound.

Five zones, five sounds

Starry Night holds together as five zones. Cypress, village, horizon/swirls, sky, stars. Each one is its own visual idea. Each one became its own sound.

A segmentation model split the painting into regions. I grouped those regions into five zones. Another tool placed each zone in a register based on its brightness.
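Here is one way to picture the register step. It assumes each zone has already been reduced to a single mean brightness between 0 and 1. The sketch spreads those values linearly over a pitch range, then snaps each note onto a scale. The range (MIDI 36 to 84), the natural minor scale, and the function names are hypothetical choices made for this example.

```python
# Hypothetical sketch: place each zone in a register from its mean brightness (0..1).
# The MIDI range and the natural minor scale are illustrative assumptions.

NATURAL_MINOR = (0, 2, 3, 5, 7, 8, 10)  # semitone offsets within an octave

def snap_to_scale(midi, root, scale=NATURAL_MINOR):
    """Move a MIDI note down to the nearest scale degree at or below it."""
    octave, offset = divmod(midi - root, 12)
    degree = max(s for s in scale if s <= offset)
    return root + 12 * octave + degree

def place_zones(zone_brightness, low=36, high=84, root=36):
    """Map each zone's brightness linearly onto [low, high], then snap to the scale."""
    return {
        zone: snap_to_scale(round(low + b * (high - low)), root)
        for zone, b in zone_brightness.items()
    }
```

The mapping never decreases, so a darker zone can never end up above a brighter one. In the painting, that is what keeps a dark mass like the cypress below bright points like the stars.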

Where each sound sits in the register came from the research: cypress at the bottom of the range, stars at the top. What each sound feels like came from me, from my own reading of the painting, informed by Van Gogh's writings and by the art historians who have studied him.

Movement made it playable

Sound built the instrument. Movement made it playable.

I rebuilt the painting as points: dark areas got fewer points, bright areas got more. Each point moves on its own, yet the image stays intact.
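Brightness-weighted point placement can be done with rejection sampling. The sketch below shows one possible approach, not necessarily the one Still Night uses. It assumes a 2D grid of lightness values. The `floor` parameter, which keeps the darkest areas from going completely empty, is an invented tweak.

```python
import random

def sample_points(lightness_grid, n_points, floor=0.05, seed=0):
    """Rejection-sample n_points positions over a 2D grid of lightness values (0..1).

    A candidate pixel is accepted with probability floor + (1 - floor) * lightness,
    so bright areas end up denser than dark ones, but dark areas never go empty.
    """
    if not 0 < floor <= 1:
        raise ValueError("floor must be in (0, 1] so sampling always terminates")
    rng = random.Random(seed)
    height, width = len(lightness_grid), len(lightness_grid[0])
    points = []
    while len(points) < n_points:
        x, y = rng.randrange(width), rng.randrange(height)
        accept = floor + (1 - floor) * lightness_grid[y][x]
        if rng.random() < accept:
            # Jitter inside the pixel so points don't line up on a rigid grid.
            points.append((x + rng.random(), y + rng.random()))
    return points
```

Density follows brightness, but every point is still placed where the painting has paint. That is why the image can stay recognizable once the points start moving independently.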

Each zone got its own movement, read from the painting itself. The village pulses in place. The horizon flows along the brushstrokes. The stars rotate and pull nearby points into spirals. The sky drifts.
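The four movements can be expressed as velocity fields that every point samples each frame. This is a simplified, hypothetical sketch. Several things in it are assumptions:

- the speeds;
- the single global brushstroke angle (a real version would read it per point from the painting);
- the star and village anchors;
- the choice to leave the cypress still.

```python
import math
from dataclasses import dataclass

@dataclass
class Point:
    x: float
    y: float
    zone: str

def velocity(p, t, *, stroke_angle=0.0, star=(0.0, 0.0), village=(0.0, 0.0)):
    """Hypothetical per-zone velocity field; every constant here is illustrative."""
    if p.zone == "sky":
        return (0.02, 0.0)  # slow, uniform drift
    if p.zone == "horizon":
        # Flow along the brushstroke direction.
        return (0.1 * math.cos(stroke_angle), 0.1 * math.sin(stroke_angle))
    if p.zone == "stars":
        dx, dy = p.x - star[0], p.y - star[1]
        falloff = 1.0 / (1.0 + dx * dx + dy * dy)  # strongest near the star
        # (-dy, dx) rotates around the star; (-dx, -dy) pulls inward -> spiral.
        return (falloff * (-dy - 0.2 * dx), falloff * (dx - 0.2 * dy))
    if p.zone == "village":
        # Expand and contract around the anchor with a period of 1 time unit.
        dx, dy = p.x - village[0], p.y - village[1]
        pulse = 0.1 * math.cos(2 * math.pi * t)
        return (pulse * dx, pulse * dy)
    return (0.0, 0.0)  # zones without a rule (e.g. cypress) stay still here

def step(points, t, dt, **fields):
    """Advance every point by one explicit Euler step."""
    for p in points:
        vx, vy = velocity(p, t, **fields)
        p.x += vx * dt
        p.y += vy * dt
```

The star field combines rotation with a small inward pull, and that combination is what turns circles into spirals. The village field oscillates, so points swell out and return over each period without drifting away.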

I built tools to mark the zones and direct the movement. Sound and your touch drive the visuals.

Shifting the mode

Starry Night measured dark. But what if it were brighter?

The research predicts the sound should shift too. The register rises, and the mode moves from minor to major. Color and motion follow.

I built the mode toggle to test it. The toggle holds the painting, the audio, and the visuals in one system and moves them together along the brightness axis. The color palette adjusts region by region.
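A toggle like this can be modeled as a single brightness value from which every output is derived, so the audio and the visuals cannot drift apart. In this hypothetical sketch:

- the root note rises with brightness;
- the scale flips from minor to major at 0.5;
- each region's color is pulled toward the target lightness in HLS space, keeping its hue and saturation.

The constants and names are illustrative.

```python
import colorsys
from dataclasses import dataclass

MINOR = (0, 2, 3, 5, 7, 8, 10)  # natural minor, semitones above the root
MAJOR = (0, 2, 4, 5, 7, 9, 11)  # major scale

@dataclass(frozen=True)
class Reading:
    root_midi: int
    scale: tuple
    palette: tuple  # one (r, g, b) color per region, 0..255

def reading_at(brightness, base_palette):
    """Derive register, mode, and per-region colors from one brightness value in 0..1."""
    root_midi = round(36 + brightness * 36)        # register rises with brightness
    scale = MAJOR if brightness >= 0.5 else MINOR  # brighter half -> major
    palette = []
    for r, g, b in base_palette:
        h, l, s = colorsys.rgb_to_hls(r / 255, g / 255, b / 255)
        # Blend the region's own lightness halfway toward the target brightness.
        l = 0.5 * l + 0.5 * brightness
        palette.append(tuple(round(c * 255) for c in colorsys.hls_to_rgb(h, l, s)))
    return Reading(root_midi, scale, tuple(palette))
```

In this model, flipping the toggle just changes which brightness value is passed in. Register, mode, and palette all update from that one number.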

Same painting. Different reading. Same system.

Why the early attempts failed

Audio came first. I tried composing music in G minor. I tried building a keyboard tuned to the painting. Each one played at the painting; none of them played with it.

Visuals followed. I tried procedural renderings, brush-stroke simulations, overlays of the painting itself. Each one looked closer than the last. None of them moved with the painting's own direction.

The painting and the sound had to listen to each other. Until they did, neither could be played.

What I learned

I built this in six weeks. I knew nothing about crossmodal research, particle systems, audio synthesis, or WebGL.

I work better with guardrails, and independent projects don't come with any. The research became the guardrails. It told me what to follow and what to leave alone.

LLMs helped me along the way. These tools can get you far, but not all the way to what you want. Still Night took countless iterations, compromises, and my own final judgment.